This isn't about whether AI is "killing" SEO. The real question is how it's fundamentally reshaping content discovery—and what publishers must do to remain visible. The shift is from ranking for clicks to being retrieved and cited by AI systems. The content signals that matter have changed dramatically, and publishers who don't adapt risk becoming invisible in an AI-first search landscape.

TLDR

- AI search engines retrieve, synthesise, and cite content rather than just ranking links

- Zero-click searches now dominate — only 360 clicks reach the open web per 1,000 Google searches in the US

- Authority signals, structured formatting, and topical depth now determine AI citation-worthiness — keyword density alone no longer moves the needle

- E-E-A-T, schema markup, and clean semantic HTML are the baseline for getting cited in AI-generated answers

- Track AI citations and brand mentions across ChatGPT, Perplexity, and Google AI Overviews — SERP rankings alone no longer tell the full story

How Generative AI Is Reshaping Content Discovery

Generative AI search tools operate fundamentally differently from traditional search engines. Instead of ranking websites and displaying blue links, platforms like Google AI Overviews, ChatGPT, and Perplexity scan indexed content, synthesise information from multiple sources, and generate answers directly on the page, typically with citations linking back to original sources.

This introduces what researchers call the "citation economy." Being cited in an AI-generated answer can drive more qualified traffic than holding the traditional #1 ranking position. Research from Microsoft Clarity shows LLM-referred visitors convert at 1.66% for signups versus just 0.15% for visitors from traditional search, roughly an 11-fold difference. The traffic volume is lower, but intent and quality are measurably higher.
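To make the trade-off between volume and quality concrete, the sketch below works through the arithmetic on the two conversion rates quoted above. The visit volume is a hypothetical figure chosen only for illustration; it does not come from the research.

```python
# Signup conversion rates quoted above (Microsoft Clarity figures).
LLM_RATE = 0.0166      # 1.66% of LLM-referred visitors sign up
SEARCH_RATE = 0.0015   # 0.15% of traditional-search visitors sign up


def expected_signups(visits: int, rate: float) -> float:
    """Expected signups for a given number of visits at a given rate."""
    return visits * rate


# How many search visits one LLM-referred visit is "worth" for signups.
ratio = LLM_RATE / SEARCH_RATE

# Hypothetical volume (an assumption for illustration only).
search_visits = 10_000
break_even_llm_visits = expected_signups(search_visits, SEARCH_RATE) / LLM_RATE

print(f"One LLM visit is worth about {ratio:.1f} search visits for signups")
print(f"{search_visits} search visits match about {break_even_llm_visits:.0f} LLM visits")
```

Under these rates, a channel sending roughly one eleventh of the traffic would produce the same number of signups.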

The Zero-Click Search Problem

For publishers, zero-click searches represent a direct revenue threat. When AI answers queries directly:

- 59.7% of EU Google searches end without a click

- Only 360 of every 1,000 searches in the US send a click to the open web, meaning sites that are neither Google-owned nor paying for Google ads

- Nearly 50% of mobile searches end the browsing session entirely

Organic CTR has plummeted 61% for queries displaying AI Overviews, according to September 2025 data from Seer Interactive. BrightEdge found that while search impressions increased 49% year-over-year, CTR dropped 30% overall.

The implication is clear: fewer clicks don't mean fewer opportunities. They mean the selection criteria for what gets cited matter more than ever.

How AI Systems Determine What to Cite

Generative AI tools are trained on massive web corpora, but not all content is treated equally. AI systems favour:

- Fresh, timestamped content with recent statistics — ChatGPT and Perplexity cite content that is 25.7% fresher on average than traditional organic results

- Authoritative sources with established credibility and verifiable claims

- Structured, scannable formatting that enables easy passage extraction

- Original data and insights rather than rehashed information

Google AI Overviews is the notable exception: it cites content that is 16 days older on average than traditional organic results, suggesting its retrieval logic weights authority over recency.

Generative Engine Optimisation (GEO): A New Discipline for Publishers

GEO is the process of structuring and positioning content to appear in AI-generated responses. It's distinct from traditional SEO, which targets algorithm-driven link rankings, and from Answer Engine Optimisation (AEO), which focuses on appearing in featured snippets.

Academic research from Princeton and IIT Delhi confirmed GEO as a measurable discipline. Their study of 10,000 queries found that GEO methods increased website visibility in AI responses by up to 40%. The top-performing strategies were:

- Citing sources from credible, authoritative publications

- Adding quotations from recognised experts

- Replacing qualitative claims with quantitative data

Keyword stuffing proved ineffective—and in some cases actively harmful—for AI retrieval.

How GEO Differs from Traditional SEO

| Aspect | Traditional SEO | GEO |
|---|---|---|
| Goal | Rank first to drive clicks | Be cited and referenced in AI-generated answers |
| Optimisation Target | Page-level ranking signals | Passage-level retrieval and synthesis |
| Key Signals | Keywords, backlinks, domain authority | E-E-A-T, citations, original data, structured formatting |
| Measurement | SERP position, organic traffic | AI citation frequency, referral quality, brand mentions |

Dropping traditional SEO entirely to chase GEO would be a mistake — organic rankings still drive the majority of referral traffic for most publishers. The practical challenge is running both in parallel: maintaining keyword and backlink fundamentals while restructuring content for passage-level AI retrieval.

From Rankings to Retrieval: The New Search Visibility Model

Traditional SEO targeted specific keyword rankings. You optimised for "best CMS for publishers," and if you ranked #1, you won the traffic. AI search introduces retrieval—where content is pulled into responses for queries it was never directly optimised for, based on topical relevance and contextual understanding.

Passage-Level Extraction Replaces Page-Level Ranking

AI systems break content into passages or "chunks" for extraction. 47% of AI Overview citations come from pages ranking outside the top 5 organic positions. Most AI-selected passages fall in the 134-167 word range.

This means:

- Every section must function as a standalone, independently understandable snippet

- Subheadings should mirror real user queries

- Introductory sentences must directly answer the question

- Context cannot depend on earlier paragraphs

Getting passage structure right is the micro-level work. The macro-level strategy—building topical authority—determines whether AI systems treat your domain as a reliable source in the first place.

Topical Authority Clusters Signal Domain Expertise

AI systems reward sites demonstrating breadth and depth across a subject area. The pillar page + cluster content model, where a comprehensive pillar page links to detailed supporting articles, signals to AI that your domain is a reliable source across a topic.

For a financial publisher, this might look like:

- Pillar page: "Complete Guide to Mutual Fund Investing in India"

- Cluster content: "ELSS vs. Equity Funds: Tax Implications," "How to Read a Mutual Fund Fact Sheet," "SIP vs. Lump Sum: Which Strategy Works Best?"

Internal linking between these pieces reinforces topical authority and helps AI systems map your content's semantic relationships.
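One way to audit a cluster is to check that the pillar page and every supporting article link to each other. The sketch below assumes you already have a map of internal links (for example, from a site crawl). The function name and the URL slugs are hypothetical.

```python
from typing import Dict, List, Set


def missing_cluster_links(pillar: str, clusters: List[str],
                          links: Dict[str, Set[str]]) -> List[str]:
    """List the pillar<->cluster links that the model expects but that are absent.

    `links` maps each page to the set of internal pages it links to.
    """
    problems = []
    for page in clusters:
        if page not in links.get(pillar, set()):
            problems.append(f"{pillar} -> {page}")
        if pillar not in links.get(page, set()):
            problems.append(f"{page} -> {pillar}")
    return problems


# Hypothetical link map for the mutual fund example above.
links = {
    "/mutual-funds-guide": {"/elss-vs-equity-funds", "/sip-vs-lump-sum"},
    "/elss-vs-equity-funds": {"/mutual-funds-guide"},
    "/read-fund-fact-sheet": {"/mutual-funds-guide"},
    "/sip-vs-lump-sum": set(),
}
clusters = ["/elss-vs-equity-funds", "/read-fund-fact-sheet", "/sip-vs-lump-sum"]
gaps = missing_cluster_links("/mutual-funds-guide", clusters, links)
for gap in gaps:
    print("Missing link:", gap)
```

Here the pillar does not link to the fact sheet article, and the SIP article does not link back to the pillar, so both gaps are reported.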

Freshness Signals Matter—But Not for Google AI Overviews

Ahrefs analysed 17 million AI citations across seven platforms and found AI assistants cite content that is one full year (368 days) newer than traditional organic results. ChatGPT and Perplexity appear to intentionally order citations by age, placing the newest content first.

However, Google AI Overviews is the exception—it cites content 16 days older than organic results on average. Publishers should prioritise content refresh workflows for ChatGPT and Perplexity visibility, but understand that Google AIO may still surface evergreen authority pieces.
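A content refresh workflow can start with something as simple as flagging articles that have not been updated within a chosen window. The sketch below is a minimal illustration. The 365-day threshold, the function name, and the slugs are assumptions, not recommendations from the research cited above.

```python
from datetime import date, timedelta
from typing import Dict, List


def stale_articles(articles: Dict[str, date], today: date,
                   max_age_days: int = 365) -> List[str]:
    """Return slugs whose last-modified date is older than the cutoff."""
    cutoff = today - timedelta(days=max_age_days)
    return [slug for slug, modified in articles.items() if modified < cutoff]


# Hypothetical last-modified dates pulled from a CMS export.
articles = {
    "/elss-vs-equity-funds": date(2023, 3, 1),
    "/sip-vs-lump-sum": date(2025, 1, 15),
}
to_refresh = stale_articles(articles, today=date(2025, 6, 1))
print(to_refresh)
```

Passing `today` explicitly keeps the check reproducible. In a scheduled job you would pass `date.today()`.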

The E-E-A-T Factor in AI-Driven Rankings

E-E-A-T (Experience, Expertise, Authoritativeness, Trustworthiness) shapes both traditional search rankings and AI citation decisions. The reason is straightforward: AI systems are trained to favour sources they can verify — which puts credibility signals at the centre of any content visibility strategy.

What E-E-A-T Looks Like in Practice

- Experience: First-person reporting, case studies, original research

- Expertise: Named authors with credentials and bios

- Authoritativeness: Citations from recognised publications, industry awards

- Trustworthiness: Fact-checking, corrections policies, verifiable claims

Research from Yext analysing 17.2 million AI citations found that healthcare shows the narrowest model divergence: all AI systems converge on authoritative sources. Official websites earn 4.31 times as many citation occurrences per URL as user-generated content.

That citation gap widens further for YMYL (Your Money or Your Life) topics — health, finance, and safety — where AI models apply the strictest source filters. Publishers in BFSI and healthcare should prioritise:

- Author bylines with verifiable credentials

- Editorial oversight and fact-checking processes

- Publication and update dates clearly displayed

- Citations to primary sources and research

The Content Signals That Make AI Systems Cite You

AI systems don't cite content at random — they retrieve passages that are easy to extract, verify, and attribute. Getting cited consistently comes down to three things: how your content is structured, how credible it appears, and how original it is.

Structure Content for Chunking-Friendly Extraction

AI systems extract standalone passages. To optimise for this:

- Lead with direct answers at the start of each section

- Use scannable formatting: bullet points, numbered lists, subheadings

- Write self-contained passages that don't require prior context

- Keep sections to 150-250 words with clear topic sentences

Good example:

### What Is Generative Engine Optimisation?

GEO is the process of structuring content to appear in AI-generated responses. Unlike traditional SEO, which targets ranked link results, GEO focuses on passage-level retrieval by AI systems like ChatGPT and Perplexity.

Bad example:

### GEO

As mentioned earlier, this is an important concept. It relates to the broader changes in search we've been discussing. Publishers should consider implementing it.
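The guidelines above (a target word range and self-contained wording) can be roughly automated. The sketch below splits a Markdown draft on its headings and flags sections that miss the word range or lean on earlier context. The phrase list and function names are illustrative assumptions, and the word range defaults to the 150-250 guideline above.

```python
import re
from typing import List, Tuple

# Phrases suggesting a passage depends on earlier context (illustrative list).
CONTEXT_DEPENDENT = (
    "as mentioned earlier",
    "as discussed above",
    "we've been discussing",
)


def split_sections(markdown: str) -> List[Tuple[str, str]]:
    """Split Markdown into (heading, body) pairs on ATX headings (#, ##, ...)."""
    sections = []
    heading, body = None, []
    for line in markdown.splitlines():
        if re.match(r"#{1,6}\s", line):
            if heading is not None:
                sections.append((heading, " ".join(body).strip()))
            heading, body = line.lstrip("#").strip(), []
        elif heading is not None:
            body.append(line.strip())
    if heading is not None:
        sections.append((heading, " ".join(body).strip()))
    return sections


def audit_section(body: str, min_words: int = 150,
                  max_words: int = 250) -> List[str]:
    """Return a list of issues found in one section body."""
    issues = []
    words = len(body.split())
    if not min_words <= words <= max_words:
        issues.append(f"{words} words (target {min_words}-{max_words})")
    lowered = body.lower()
    for phrase in CONTEXT_DEPENDENT:
        if phrase in lowered:
            issues.append(f"context-dependent phrase: '{phrase}'")
    return issues


draft = """### GEO

As mentioned earlier, this is an important concept. It relates to the broader
changes in search we've been discussing. Publishers should consider implementing it.
"""
for heading, body in split_sections(draft):
    print(heading, audit_section(body))
```

A phrase match is only a hint that a passage may not stand alone, so treat the output as a review queue and not as a verdict.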

Implement Schema Markup as a Trust Signal

Structured data helps AI systems understand, categorise, and surface content accurately. Publishers should implement:

- Article schema for all editorial content

- FAQPage schema for question-and-answer sections

- HowTo schema for step-by-step guides

- Organization schema (schema.org uses the American spelling for the type name) for company information

Schema serves as the foundation for Answer Engine Optimisation (AEO) and increases the probability of accurate citation.
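As an illustration of Article markup, the sketch below builds a JSON-LD block using standard schema.org property names (`headline`, `author`, `datePublished`, `dateModified`, `publisher`). The function name and all sample values are hypothetical, and a real implementation would normally live in your CMS templates.

```python
import json


def article_jsonld(headline: str, author_name: str, date_published: str,
                   date_modified: str, publisher_name: str) -> str:
    """Return a <script> tag containing schema.org Article markup as JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "Article",
        "headline": headline,
        "author": {"@type": "Person", "name": author_name},
        "datePublished": date_published,   # ISO 8601 date
        "dateModified": date_modified,     # ISO 8601 date
        "publisher": {"@type": "Organization", "name": publisher_name},
    }
    payload = json.dumps(data, indent=2)
    # Escape "</" so a value containing "</script>" cannot close the tag early.
    payload = payload.replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'


# Hypothetical sample values.
tag = article_jsonld(
    headline="SIP vs. Lump Sum: Which Strategy Works Best?",
    author_name="A. Sharma",
    date_published="2025-01-15",
    date_modified="2025-06-01",
    publisher_name="Example Finance Daily",
)
print(tag)
```

Generating the markup from the same fields that drive the visible byline and dates helps keep the structured data consistent with what readers see.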

Prioritise Original Data and Unique Insights

Structure gets you in the door — but original content is what earns the citation. Google's "information gain" patent measures content originality by comparing new information against what's already indexed. AI systems follow the same logic: rehashed content gets passed over.

The Princeton/IIT Delhi GEO study confirmed this directly — statistics addition (replacing qualitative discussion with quantitative data) ranked among the top three citation-boosting strategies. Content types that perform best include:

- Original reporting with primary source quotes

- Proprietary statistics or survey data

- First-person expert perspectives on industry-specific topics

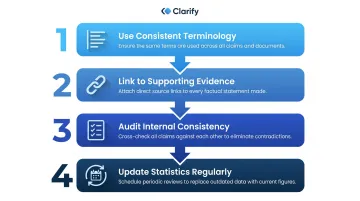

Maintain Consistent, Verifiable Claims

AI systems cross-reference information across sources before citing. To increase retrieval probability:

- Use consistent terminology throughout your content — terminology shifts confuse retrieval models

- Link to supporting evidence and credible external sources

- Audit internal consistency across related articles on the same topic

- Update statistics and claims whenever new data supersedes existing figures

Technical & Structural Optimisation for AI Search Discoverability

AI crawlers behave differently from Googlebot. They fetch raw HTML and do not execute JavaScript, so content that only appears after client-side rendering is invisible to them.

AI Crawler User Agents and Their Limitations

Major AI crawlers include:

| User Agent | Purpose |
|---|---|
| GPTBot | Crawls content used to train OpenAI models |
| OAI-SearchBot | Surfaces websites in ChatGPT search results |
| ClaudeBot | Collects content for Anthropic training |
| PerplexityBot | Indexes content for Perplexity search |

None of these crawlers render JavaScript. They function like basic curl commands, fetching only server-rendered HTML. Sites relying on client-side rendering (CSR) appear as empty shells to AI crawlers.
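To see what a non-rendering crawler can read, you can extract the text present in the raw HTML while ignoring anything inside `<script>` and `<style>`. The sketch below uses Python's standard `html.parser` on two hypothetical pages. It approximates the behaviour described above and does not model any specific crawler.

```python
from html.parser import HTMLParser


class VisibleTextParser(HTMLParser):
    """Collects text outside <script>/<style>, i.e. text present without running JS."""

    def __init__(self):
        super().__init__()
        self._skip_depth = 0
        self.chunks = []

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth and data.strip():
            self.chunks.append(data.strip())


def visible_text(html: str) -> str:
    """Return the text a client would see without executing JavaScript."""
    parser = VisibleTextParser()
    parser.feed(html)
    parser.close()
    return " ".join(parser.chunks)


# Hypothetical pages: one client-side rendered, one server-side rendered.
csr_page = '<html><body><div id="root"></div><script>render("Mutual fund guide")</script></body></html>'
ssr_page = "<html><body><h1>Mutual fund guide</h1><p>Intro text.</p></body></html>"

print(repr(visible_text(csr_page)))
print(repr(visible_text(ssr_page)))
```

The client-side rendered page yields an empty string, which is the "empty shell" problem in miniature. Running the same check on the HTML your server actually returns is a quick way to spot it.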

Publishers must ensure:

- Server-side rendering or static site generation

- Clean semantic HTML with proper heading hierarchy

- A robots.txt file that allows AI user agents (unless you explicitly want to block them)

- No critical content hidden behind JavaScript execution
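The robots.txt item in the checklist above can be verified with Python's standard `urllib.robotparser`. The sketch below parses a robots.txt body and reports whether each user agent from the table above may fetch a given URL. The sample rules and URL are hypothetical.

```python
from typing import Dict
from urllib.robotparser import RobotFileParser

# User agents taken from the table above.
AI_USER_AGENTS = ["GPTBot", "OAI-SearchBot", "ClaudeBot", "PerplexityBot"]


def ai_crawler_access(robots_txt: str, url: str) -> Dict[str, bool]:
    """Map each AI user agent to whether robots.txt lets it fetch `url`."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in AI_USER_AGENTS}


# Hypothetical robots.txt that blocks GPTBot but allows everyone else.
sample_robots = """\
User-agent: GPTBot
Disallow: /

User-agent: *
Disallow: /admin/
"""

access = ai_crawler_access(sample_robots, "https://example.com/articles/geo-guide")
for agent, allowed in access.items():
    print(f"{agent}: {'allowed' if allowed else 'blocked'}")
```

To check a live site, `RobotFileParser` also offers `set_url()` and `read()` to fetch the file over the network. The string-based version here keeps the example offline.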

Sitemap Hygiene for High-Volume Publishers

Keeping sitemap.xml updated ensures new content is discovered and indexed quickly by both search engines and AI systems. For publishers posting dozens of articles daily, this is operationally critical.

Best practices:

- Auto-generate sitemaps on content publication

- Include publication and last-modified dates

- Segment sitemaps by content type (news, features, evergreen)

- Submit updated sitemaps to Google Search Console regularly
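A minimal sketch of auto-generating a sitemap with last-modified dates is shown below. It uses the standard sitemaps.org namespace with `<loc>` and `<lastmod>` elements. The function name and URLs are hypothetical, and a production version would be triggered by your CMS publish hook and split into segments by content type.

```python
import xml.etree.ElementTree as ET
from datetime import date
from typing import Iterable, Tuple

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"


def build_sitemap(entries: Iterable[Tuple[str, date]]) -> str:
    """Build a sitemap XML string from (url, last_modified) pairs."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, last_modified in entries:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        # <lastmod> uses the W3C date format, e.g. 2025-06-01.
        ET.SubElement(url, "lastmod").text = last_modified.isoformat()
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body


# Hypothetical articles.
sitemap_xml = build_sitemap([
    ("https://example.com/news/rate-cut-explained", date(2025, 6, 1)),
    ("https://example.com/guides/mutual-funds", date(2025, 5, 20)),
])
print(sitemap_xml)
```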

Platform Infrastructure and Core Web Vitals

Technical performance directly impacts crawlability and user experience. Publive's platform achieves a 98% Core Web Vitals pass rate, one of the highest among leading DXPs per HTTP Archive 2025 data, ensuring pages load and render correctly for both human users and AI crawlers.

Poor Core Web Vitals create a direct risk for AI discoverability. Publishers on underperforming platforms may be excluded from AI citations due to:

- Slow load times that exceed crawler timeout thresholds

- Layout shifts that signal unstable or incomplete page rendering

- Rendering failures that return incomplete HTML to AI crawlers

Tracking What Matters: Measuring AI-Era Content Performance

Traditional rank tracking alone no longer reflects true search visibility. Publishers need to supplement SERP position monitoring with tracking of AI citations.

New Metrics for AI-Era Content Health

Track these signals regularly:

- Brand mention frequency in AI responses across ChatGPT, Perplexity, and Google AI Overviews

- Citation quality (is your content being accurately represented?)

- Referral traffic from AI-cited URLs

- Engagement quality of visitors arriving from AI-generated answers vs. traditional search
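Referral traffic from AI platforms can be approximated by classifying referrer URLs from your analytics export. In the sketch below, the hostname list is an assumption that you should verify against your own logs, and the function names are hypothetical. Clicks from Google AI Overviews generally arrive with an ordinary Google referrer, so a hostname check like this is unlikely to separate them from regular search.

```python
from collections import Counter
from typing import Iterable
from urllib.parse import urlparse

# Hostname -> platform. These hostnames are assumptions; confirm them in your logs.
AI_REFERRER_HOSTS = {
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "www.perplexity.ai": "Perplexity",
}


def classify_referrer(referrer: str) -> str:
    """Return the AI platform for a referrer URL, or 'other'."""
    host = (urlparse(referrer).hostname or "").lower()
    return AI_REFERRER_HOSTS.get(host, "other")


def referral_breakdown(referrers: Iterable[str]) -> Counter:
    """Count visits per platform for a list of referrer URLs."""
    return Counter(classify_referrer(r) for r in referrers)


# Hypothetical referrers from an analytics export.
sample = [
    "https://chatgpt.com/",
    "https://www.perplexity.ai/search?q=best+cms",
    "https://www.google.com/",
    "",
]
print(referral_breakdown(sample))
```

Pairing these counts with conversion or engagement data per platform gives a first view of the quality comparison described above.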

Tools for AI citation tracking include:

- Otterly.ai — Tracks brand mentions across ChatGPT, Perplexity, and Google AI Overviews

- Semrush AI Visibility Checker — Monitors brand visibility, prompts, and competitor presence

- Yext Scout — Tracks AI search visibility and competitors in real time

- BrightEdge — Enterprise SEO platform with AI Overview tracking and share-of-voice reporting

Query AI Platforms Manually for Topic Coverage

Automated tools capture volume — manual queries reveal context. Run your core topics through ChatGPT, Perplexity, and Google AI Overviews directly, and track:

- Which competitors are cited

- What sources AI systems prioritise

- How your content is referenced (if at all)

- Accuracy of citations and context

This qualitative analysis reveals content gaps and citation opportunities that automated tools may miss.

Use Integrated Analytics Platforms

Publive's analytics platform connects GA4 and GSC data into a single Golden Signals Dashboard, giving publishers a clear view of which content earns discovery, referral traffic, and meaningful engagement across both traditional and AI-driven channels. That visibility tells you not just what's performing — but why, and where to focus next.

Frequently Asked Questions

How do AI Overviews affect organic traffic for publishers?

AI Overviews often answer queries directly on the SERP, reducing click-throughs to source websites. However, content cited in AI Overviews can generate highly qualified referral traffic, so citation-worthiness, and not ranking position alone, now belongs at the centre of traffic strategy.

What is Generative Engine Optimisation (GEO) and how is it different from traditional SEO?

GEO focuses on making content retrievable and citable by AI-generated answer systems like ChatGPT or Google AI Overviews, while traditional SEO targets algorithm-ranked link results. Both strategies now need to coexist for comprehensive search visibility.

Does Google penalise AI-generated content?

Google penalises low-quality, unhelpful content regardless of origin—AI-generated or human-written. Content demonstrating original insight, accuracy, and genuine value can rank well even if AI-assisted, provided human oversight ensures quality and E-E-A-T signals are present.

How can publishers optimise content to be cited by ChatGPT or Perplexity?

Several factors improve your chances of being cited by AI systems:

- Place clear, direct answers at the start of each section

- Use structured formatting: bullets, subheadings, and numbered lists

- Add schema markup and original data or proprietary insights

- Include strong E-E-A-T signals: author credentials and cited sources

- Ensure robots.txt permits access for AI crawlers

What role does E-E-A-T play in AI-driven content rankings?

AI systems heavily weight Experience, Expertise, Authoritativeness, and Trustworthiness when selecting sources to cite. Publishers should include author credentials, cite verifiable evidence, and publish content that demonstrates first-hand knowledge or genuine expert analysis.

How do I measure whether my content is appearing in AI-generated search results?

Conduct manual queries on ChatGPT, Perplexity, and Google AI Overviews for key topics, set up brand alerts using tools like Otterly.ai or Semrush AI Visibility Checker, and track referral traffic from AI-cited URLs in addition to standard rank tracking.