This article isn't about whether your team should adopt generative AI. It's about how to do it right, covering use cases, governance frameworks, optimisation for AI discovery, and platform selection that consolidates tools rather than creating new silos.

The teams winning with GenAI aren't just writing faster. They're restructuring workflows, governance models, and content infrastructure around it.

TLDR

- GenAI is reshaping enterprise content workflows across creation, personalisation, SEO, and distribution

- Governance gaps create real risk — only 25% of organisations have implemented AI governance programmes

- Optimising for GEO (Generative Engine Optimisation) is now critical, as 55% of Google searches show AI Overviews

- The 10-20-70 rule helps explain why at least 50% of GenAI projects are abandoned after proof of concept: technology gets funded; people and process don't

- AI-first platforms replace disconnected tools with a unified system — faster output, fewer vendors, tighter governance

What Is Generative AI Content Management?

Generative AI content management integrates large language models, image generators, and translation engines into the complete content lifecycle—from ideation and creation to distribution, versioning, and governance—within an enterprise CMS environment.

This isn't about basic AI writing assistants. Enterprise-grade GenAI content management requires:

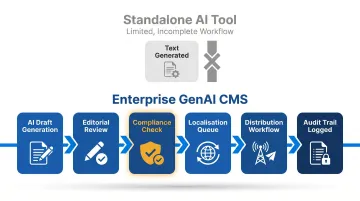

- Structured workflows that embed AI at specific stages without bypassing approval gates

- Metadata management tracking AI-generated content, version history, and human approvals

- Consistent brand voice across formats through multi-channel publishing pipelines

- Role-based access that gates AI capabilities by user permission level

- Human-in-the-loop review at every publication checkpoint

The practical difference: a standalone AI tool generates text. An enterprise GenAI CMS controls where that text goes next—routing it through editorial review, compliance checks, localisation queues, and distribution workflows, with a full audit trail at each stage.

Key Use Cases of Generative AI for Enterprise Content Teams

AI-Powered Content Creation and Repurposing

Enterprise teams use GenAI to draft long-form articles, generate social media variants, write product descriptions, and repurpose existing content across formats (blog → newsletter → short-form video scripts).

McKinsey estimates generative AI could raise marketing productivity by a value equivalent to 5% to 15% of total marketing spending. The technology can also take on information search and gathering, tasks that consume roughly 20% of knowledge workers' time, or about one day per work week.

Among enterprise marketers using AI content tools, 84% report improved productivity, 76% cite better operational efficiency, and 64% note streamlined creative capabilities.

The quality caveat: 12% of enterprise marketers report that content quality actually decreased after AI adoption, and another 34% see no change in performance despite using AI. Productivity gains don't guarantee audience impact.

Personalisation at Scale

GenAI analyses behavioural and demographic data to generate content variants tailored to individual audience segments. For a financial institution, this means serving different content to retail investors versus high-net-worth individuals, without manually creating every permutation.
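To make "without manually creating every permutation" concrete, here is a minimal Python sketch of variant selection with a fallback chain. The `Reader` type, the `VARIANTS` store, the segment names, and the headlines are all hypothetical. A real platform would pull reviewed variants from its CMS, not from a dictionary. The fallback order is what keeps the number of permutations manageable: a segment needs its own variant only where it genuinely differs from the default.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Reader:
    segment: str          # e.g. "retail" or "hnw" (hypothetical segment names)
    language: str = "en"


# Hypothetical store of already-reviewed variants, keyed by (article, segment, language).
VARIANTS = {
    ("fund-update", "default", "en"): "Fund restructuring: what changes",
    ("fund-update", "retail", "en"): "What this fund change means for your monthly investments",
    ("fund-update", "hnw", "en"): "Portfolio implications of the fund restructuring",
}


def pick_variant(article: str, reader: Reader) -> str:
    """Return the most specific variant available, falling back step by step."""
    fallbacks = (
        (reader.segment, reader.language),  # exact match
        (reader.segment, "en"),             # right segment, default language
        ("default", reader.language),       # generic copy in the reader's language
        ("default", "en"),                  # generic copy, default language
    )
    for segment, language in fallbacks:
        headline = VARIANTS.get((article, segment, language))
        if headline is not None:
            return headline
    raise KeyError(f"no variant stored for {article!r}")
```

For example, `pick_variant("fund-update", Reader("retail", "ta"))` returns the English retail headline, because no Tamil retail variant has been stored.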

Personalisation typically drives a 10-15% revenue lift, and fast-growing companies generate 40% more revenue from personalisation than slower-growing peers. Audiences expect it too: 71% of consumers expect personalisation, and 76% get frustrated when they don't get it.

The enterprise gap is significant. While 91% of enterprise marketers personalise content, 58% describe their efforts as "basic" (1-2 channels). Only 1% achieve comprehensive, full-journey AI-driven personalisation.

SEO and Metadata Automation

GenAI can auto-generate meta descriptions, alt text, tags, and schema markup at scale, reducing manual SEO overhead while improving discoverability. This capability feeds into GEO (Generative Engine Optimisation) readiness, which we'll cover later.

About 71% of sites attempt to implement schema markup, yet only 22% of sites deploying it pass Google's Rich Results Test. That gap between deployment and compliance shows where automation fails without quality control.

Manual metadata work carries hidden costs. Knowledge workers lose 45 minutes a day toggling between tools and need about 9.5 minutes to regain productive flow after each switch. Automating metadata tasks cuts this overhead, which matters even more when teams manage content across multiple languages and markets.

Multilingual and Multi-Market Content

Enterprise teams serving regional or global audiences use GenAI translation and localisation to produce content in multiple languages without adding headcount at the same rate.

In one enterprise study, 86% of respondents report that AI accelerates content creation. Yet the same study finds that AI is simultaneously slowing localisation because of the rework it requires: 21% of localisation budgets currently go to correcting AI-generated content.

Faster creation and stricter quality governance are two demands that pull in opposite directions. For enterprises serving India's diverse linguistic markets across Hindi, Tamil, Marathi, Bengali, and Gujarati, translation speed means little if cultural nuance and legal accuracy are compromised in the process.

Content Workflow Acceleration

Platforms with native AI integration, rather than copy-paste between external tools, let editors generate drafts, variants, and visual assets without leaving their authoring environment.

Publive, for instance, embeds content generation, social media distribution, and push notifications directly inside the authoring environment. Content teams working within a single unified platform report up to 60% faster output, largely because editors no longer lose time moving content between disconnected tools.

Building a Governance Framework Before You Scale

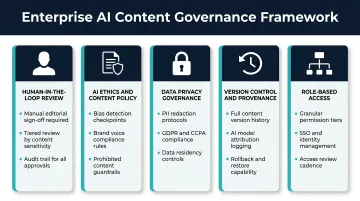

Define What "Human-in-the-Loop" Means

Governance starts with establishing clear review checkpoints where human editors verify AI-generated content for accuracy, brand voice, and factual integrity before publication.

For regulated industries like finance and healthcare, this is non-negotiable. AI hallucination rates in medical/health content average 18.2% across all models and 4.5% even among top-tier models. Financial data hallucinations average 13.8% across all models.

Your governance framework should specify:

- Which content types require human review (all content, or everything except designated low-risk types)

- Who conducts review (editors, compliance, legal)

- What review criteria apply (fact-checking, brand voice, regulatory compliance)

- What happens when AI output fails review (reject, edit, escalate)
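One way to make these four decisions explicit is to encode them as data that the workflow engine reads. The sketch below is illustrative only. The content types and reviewer roles are assumptions chosen to mirror the list above, and so is the rule that compliance failures escalate while factual failures are rejected. None of it is a recommended policy.

```python
from dataclasses import dataclass
from enum import Enum


class Outcome(Enum):
    APPROVE = "approve"
    EDIT = "edit"          # return to the writer with requested changes
    REJECT = "reject"      # discard the AI output
    ESCALATE = "escalate"  # send to a more senior reviewer


# Hypothetical policy: which reviewer roles must sign off on each content type.
# An empty tuple marks a low-risk type that skips mandatory review.
REVIEW_POLICY: dict[str, tuple[str, ...]] = {
    "social_post": (),
    "blog_article": ("editor",),
    "market_commentary": ("editor", "compliance"),
    "health_guidance": ("editor", "compliance", "legal"),
}


@dataclass
class ReviewResult:
    reviewer_role: str
    facts_verified: bool
    on_brand: bool
    compliant: bool


def required_reviewers(content_type: str) -> tuple[str, ...]:
    # Unknown content types default to the strictest review chain.
    return REVIEW_POLICY.get(content_type, ("editor", "compliance", "legal"))


def decide(result: ReviewResult) -> Outcome:
    """Map one reviewer's checks to an outcome, most serious failure first."""
    if not result.compliant:
        # Regulatory problems go up the chain rather than being patched inline.
        return Outcome.ESCALATE
    if not result.facts_verified:
        return Outcome.REJECT
    if not result.on_brand:
        return Outcome.EDIT
    return Outcome.APPROVE
```

Keeping the policy in a table rather than scattered through code means compliance teams can review and change it without touching the workflow logic.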

Establish an AI Ethics and Content Policy

Enterprise teams need documented guidelines covering disclosure of AI-generated content, prohibited use cases, bias-checking procedures, and IP/copyright considerations.

Only 25% of organisations have fully implemented AI governance programmes despite near-universal AI adoption. Just 27% of boards have formally incorporated AI governance into committee charters.

The cost of this gap: 47% of organisations experienced at least one negative GenAI consequence, including compliance exceptions, quality errors, or data exposure.

Your policy should address:

- When and how to disclose AI-generated content to audiences

- Prohibited use cases (e.g., no AI-generated legal or medical advice without expert review)

- Bias detection and mitigation procedures

- Copyright and IP ownership for AI-assisted content

Data Privacy and Training Data Governance

Feeding proprietary or customer data into third-party AI tools without proper data processing agreements creates liability.

For Indian enterprises, compliance deadlines are urgent. India's DPDP Act and Rules (notified November 13, 2025) impose 72-hour breach reporting requirements, mandatory consent management, and 18-month compliance deadlines for data fiduciaries.

Organisations processing EU audience data face GDPR obligations: privacy by design, data protection impact assessments (DPIAs), and Standard Contractual Clauses (SCCs) for international transfers.

Practical steps to close the gap:

- Map which data enters which AI model and under what conditions

- Require contracts with AI vendors to specify data handling, training exclusions, and deletion rights
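The mapping step can start as a simple inventory that is checked against vendor contract terms. In this minimal sketch, the data categories, tool names, and the three contract flags (DPA, training exclusion, deletion rights) are hypothetical placeholders. Treat it as a checklist generator, not a legal assessment.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class VendorContract:
    # Hypothetical contract terms tracked per AI vendor.
    has_dpa: bool            # data processing agreement signed
    excludes_training: bool  # vendor may not train models on your data
    supports_deletion: bool  # you can require deletion of submitted data


@dataclass(frozen=True)
class DataFlow:
    data_category: str  # e.g. "customer_pii", "public_articles"
    ai_tool: str        # hypothetical tool name
    condition: str      # when this flow is permitted


SENSITIVE = {"customer_pii", "financial_records", "health_data"}


def flow_gaps(flows: list[DataFlow], contracts: dict[str, VendorContract]) -> list[str]:
    """List every mapped data flow that lacks the contract terms it needs."""
    gaps = []
    for flow in flows:
        contract = contracts.get(flow.ai_tool)
        if contract is None:
            gaps.append(f"{flow.ai_tool}: no contract on file for {flow.data_category}")
            continue
        if flow.data_category in SENSITIVE:
            missing = [
                name
                for name, ok in (
                    ("DPA", contract.has_dpa),
                    ("training exclusion", contract.excludes_training),
                    ("deletion rights", contract.supports_deletion),
                )
                if not ok
            ]
            if missing:
                gaps.append(f"{flow.ai_tool}: {flow.data_category} lacks {', '.join(missing)}")
    return gaps
```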

Knowing exactly what data your AI tools touch is the foundation for the next control layer: tracking what content they produce.

Version Control and Content Provenance

Audit trails, rollback capability, and brand accountability all depend on knowing which content was AI-assisted, when it was generated, who approved it, and what version is currently live.

Gartner predicts that by 2028, 50% of organisations will implement zero-trust data governance due to the proliferation of unverified AI-generated data.

Your CMS should log:

- AI generation timestamp and model used

- Human editor who reviewed and approved

- Content modifications post-generation

- Version history with rollback capability
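These four fields map naturally onto a version history. The sketch below assumes a hypothetical `ContentItem` model with three rules:

- Each AI generation or human edit appends a new immutable `Version`.
- Approval records the editor's name.
- Rollback is allowed only to a previously approved version.

Model names and identifiers are placeholders.

```python
from dataclasses import dataclass, field, replace
from datetime import datetime, timezone
from typing import Optional


@dataclass(frozen=True)
class Version:
    number: int
    body: str
    created_at: datetime               # UTC timestamp of the generation or edit
    author: str                        # "ai" or the human user's name
    model: Optional[str] = None        # model identifier when AI-generated
    approved_by: Optional[str] = None  # editor who approved this version


@dataclass
class ContentItem:
    item_id: str
    versions: list[Version] = field(default_factory=list)
    live_version: Optional[int] = None

    def add_version(self, body: str, author: str, model: Optional[str] = None) -> Version:
        # Every AI generation or human edit appends a new version to the history.
        version = Version(len(self.versions) + 1, body, datetime.now(timezone.utc), author, model)
        self.versions.append(version)
        return version

    def approve(self, number: int, editor: str) -> None:
        # Versions are frozen, so approval stores a copy with the approver recorded.
        self.versions[number - 1] = replace(self.versions[number - 1], approved_by=editor)
        self.live_version = number

    def rollback(self, number: int) -> None:
        if self.versions[number - 1].approved_by is None:
            raise ValueError("can only roll back to a previously approved version")
        self.live_version = number

    def was_ai_assisted(self) -> bool:
        return any(v.model is not None for v in self.versions)
```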

Role-Based Access and Approval Workflows

Governance at scale requires assigning AI tool access by role: writers generate drafts, editors approve, and compliance teams review regulated content before it reaches the publish queue. A BFSI content team, for instance, might allow junior writers to use AI for first drafts of market commentary — but a compliance editor must clear the piece before it goes live. Without that gate, a single user with AI access can push unreviewed financial guidance directly to your audience, with no record of who approved it or why.
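The BFSI example translates into a small permission check. This hypothetical sketch uses Python's `enum.Flag`. The role names, the permission set, and the choice of which content types count as regulated are all assumptions. A production CMS would enforce the same rule server-side and log each approval.

```python
from enum import Flag, auto


class Perm(Flag):
    USE_AI = auto()
    APPROVE_EDITORIAL = auto()
    APPROVE_COMPLIANCE = auto()
    PUBLISH = auto()


# Hypothetical role map loosely mirroring the BFSI example.
ROLES = {
    "junior_writer": Perm.USE_AI,
    "editor": Perm.USE_AI | Perm.APPROVE_EDITORIAL | Perm.PUBLISH,
    "compliance_editor": Perm.APPROVE_COMPLIANCE,
}

REGULATED_TYPES = {"market_commentary", "investment_guidance"}


def can_publish(content_type: str, approvals: dict[str, str], publisher_role: str) -> bool:
    """approvals maps each approving user's name to their role (the audit record)."""
    if not ROLES.get(publisher_role, Perm(0)) & Perm.PUBLISH:
        return False
    granted = Perm(0)
    for role in approvals.values():
        granted |= ROLES.get(role, Perm(0))
    if not granted & Perm.APPROVE_EDITORIAL:
        return False
    # Regulated content additionally needs a compliance sign-off.
    if content_type in REGULATED_TYPES and not granted & Perm.APPROVE_COMPLIANCE:
        return False
    return True
```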

How to Optimise Content for Generative AI Discovery

Understand the Shift from SEO to GEO

Some 55% of Google searches now show AI Overviews, and 58% of searches end without a click. As AI-powered search experiences such as Google's AI Overviews, Perplexity, and ChatGPT search increasingly surface answers directly in results, enterprise content must be structured so these systems cite it.

This means prioritising authority signals, clear factual structure, and semantic depth over keyword density alone. Of the sources cited in AI Overviews, 40% rank in positions 11-20 on standard SERPs rather than in the top 10, so position alone no longer determines citability.

The stakes are concrete: some websites report 20–40% traffic declines since AI Overviews launched, while others see gains by structuring content for AI citability. Optimising for GEO is no longer optional for enterprise teams; it's a baseline requirement.

Structure Content for Machine Readability

AI search engines parse content into smaller, structured pieces rather than evaluating entire pages. Microsoft's guidance on AI search optimisation emphasises that content must be "fresh, authoritative, structured, and semantically clear."

Specific structural choices that improve GenAI citability:

- Clear H2/H3 hierarchies that break content into logical sections

- FAQ-style sections with direct question-and-answer formats

- Concise definitions early in articles

- Schema markup (JSON-LD) for entities, events, and relationships

- Semantic HTML that signals content meaning to machines
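For the schema markup item, JSON-LD is usually generated from structured fields rather than written by hand. This minimal sketch builds a schema.org `FAQPage` object from question-and-answer pairs using only the standard library. The `faq_jsonld` and `script_tag` helper names are hypothetical. Validate the output with Google's Rich Results Test before publishing.

```python
import json


def faq_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Build a schema.org FAQPage JSON-LD string from (question, answer) pairs."""
    doc = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(doc, ensure_ascii=False, indent=2)


def script_tag(jsonld: str) -> str:
    # Escape "</" so answer text cannot close the script element early;
    # "<\/" is still valid JSON and decodes back to "</".
    return '<script type="application/ld+json">' + jsonld.replace("</", "<\\/") + "</script>"
```

Generating markup from the same fields that render the visible FAQ keeps the two in sync, which helps avoid the deployment-versus-compliance gap described earlier.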

AI systems built on retrieval-augmented generation (RAG) retrieve content in chunks, so how you structure each section strongly influences whether your content gets cited or skipped.
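To see why section structure matters, consider a deliberately simple chunker. It splits a Markdown page at H2/H3 headings and keeps the heading path with each chunk. Real retrieval pipelines use their own splitting rules (token limits, overlap, HTML parsing). Treat this as an illustration of the principle, not of how any specific AI search engine works. The point it shows: a section with a descriptive heading and a self-contained answer survives chunking intact.

```python
import re
from dataclasses import dataclass


@dataclass
class Chunk:
    heading_path: tuple[str, ...]
    text: str


# Matches "## Heading" or "### Heading"; H4 and deeper stay in the body text.
HEADING = re.compile(r"^(#{2,3})\s+(.*)$")


def chunk_by_headings(markdown: str) -> list[Chunk]:
    """Split Markdown into one chunk per H2/H3 section, keeping the heading path."""
    chunks: list[Chunk] = []
    path: list[str] = []
    buffer: list[str] = []

    def flush() -> None:
        body = "\n".join(buffer).strip()
        if body:
            chunks.append(Chunk(tuple(path), body))
        buffer.clear()

    for line in markdown.splitlines():
        match = HEADING.match(line)
        if match:
            flush()
            level = len(match.group(1))  # 2 for H2, 3 for H3
            del path[level - 2:]         # drop headings at this depth or deeper
            path.append(match.group(2).strip())
        else:
            buffer.append(line)
    flush()
    return chunks
```

A retrieved chunk carries only its own text plus the heading path. An answer that depends on context three sections earlier arrives without that context.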

Build Topical Authority and Semantic Richness

AI search engines favour content ecosystems over individual articles. Rather than chasing isolated high-volume posts, enterprise teams should build interconnected content clusters with consistent entity coverage across a topic.

Some 88% of AI Overviews cite three or more sources; only 1% cite a single source. Short AI Overviews average 5 citations, while longer responses pull from 28. Depth and breadth of topical coverage both matter.

To build that coverage:

- Organise content clusters around core topics, not just keywords

- Link related articles internally to signal semantic relationships

- Maintain consistent entity coverage across the cluster (same terminology, same definitions)

Keep Content Fresh and Accurate

Topical authority erodes quickly if your content cluster contains stale statistics or contradictory claims, because GenAI retrieval systems penalise both.

Enterprise teams must establish content refresh schedules and sunset policies for outdated articles, and keep facts consistent across the content library. This requires robust CMS infrastructure, not just editorial discipline. Your platform should support:

- Scheduled content audits and refresh workflows

- Automated flagging of outdated statistics or references

- Version control that tracks updates and maintains accuracy
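Automated flagging can begin with two cheap heuristics. The first flags a review date older than the refresh interval. The second flags an article whose most recent cited year is several years old. This sketch is an assumption-laden starting point: the 180-day interval, the two-year statistic window, and the year-matching regex are all arbitrary choices. It is meant to feed an editor's audit queue, not replace it.

```python
import re
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class Article:
    slug: str
    body: str
    last_reviewed: date


# Four-digit years from 1900 to 2099: a crude proxy for when a statistic dates from.
YEAR = re.compile(r"\b(?:19|20)\d{2}\b")


def audit(articles: list[Article], today: date,
          max_age_days: int = 180, max_stat_age_years: int = 2) -> dict[str, list[str]]:
    """Return {slug: reasons} for articles that should enter the refresh queue."""
    flagged: dict[str, list[str]] = {}
    for article in articles:
        reasons = []
        if today - article.last_reviewed > timedelta(days=max_age_days):
            reasons.append("review overdue")
        years = [int(match) for match in YEAR.findall(article.body)]
        if years and today.year - max(years) > max_stat_age_years:
            reasons.append(f"newest cited year is {max(years)}")
        if reasons:
            flagged[article.slug] = reasons
    return flagged
```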

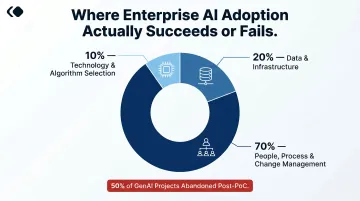

The 10-20-70 Rule: Planning Your Enterprise AI Adoption

The 10-20-70 rule, published by BCG (Boston Consulting Group), allocates roughly 10% of AI adoption effort to algorithms and technology selection, 20% to data and infrastructure, and 70% to people, processes, and change management.

The implication: most enterprises overinvest in tool selection and underinvest in organisational readiness.
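The arithmetic is easy to turn into a budget check. The sketch below splits a total using the 10-20-70 proportions and reports how far a given plan deviates from them, in percentage points. The bucket names are hypothetical, and the rule is a planning heuristic, not a precise target.

```python
# 10-20-70 proportions: technology, data and infrastructure, people and process.
BENCHMARK = {"technology": 0.10, "data": 0.20, "people_process": 0.70}


def allocate(total: float) -> dict[str, float]:
    """Split a total adoption budget using the 10-20-70 proportions."""
    return {bucket: round(total * share, 2) for bucket, share in BENCHMARK.items()}


def plan_gaps(plan: dict[str, float]) -> dict[str, float]:
    """Percentage-point difference between a plan's shares and the benchmark."""
    total = sum(plan.values())
    return {
        bucket: round(100 * (plan.get(bucket, 0.0) / total - share), 1)
        for bucket, share in BENCHMARK.items()
    }
```

Consider a plan that spends 500,000 on tooling, 300,000 on data, and 200,000 on people and process. It comes out 40 points over on technology and 50 points under on people and process, which is exactly the overinvestment pattern described above.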

Failure data supports this framework. At least 50% of generative AI projects were abandoned after proof of concept by the end of 2025, due to poor data quality, inadequate risk controls, escalating costs, or unclear business value. Only 5% of organisations have reaped substantial financial gains from AI.

Apply It Practically to Enterprise Content Teams

Spend most of your adoption energy here:

- Retrain content teams to treat AI as a collaborator, not a replacement

- Redefine editor roles around strategic judgment and brand storytelling while AI handles high-volume tasks

- Build approval workflows that embed human review without creating bottlenecks

- Cultivate cultural readiness so AI is trusted and used consistently across the team

Technology selection is only 10% of the equation, and data and infrastructure account for another 20%. The remaining 70%, how your team works, learns, and adapts, is where AI adoption actually wins or stalls.

Address the FOMO vs. FOGI Tension

Enterprise leaders simultaneously fear missing out on productivity gains (FOMO) and fear getting in due to trust, compliance, and output quality concerns (FOGI—Fear of Getting In).

The 10-20-70 rule cuts through this tension directly: trust comes from process, not from the tool itself. Clear governance frameworks, defined roles, and structured change management give teams a safe path to scale AI without losing control over quality or compliance.

What to Look for in an AI-First Content Management Platform

Native AI Integration vs. Bolt-On Tools

Platforms with AI embedded in the authoring workflow let editors generate drafts, variants, and assets without leaving their interface. This reduces context-switching, enforces brand consistency, and creates a cleaner audit trail.

Bolt-on tools require exporting content to external services, reintroducing it, and manually tracking provenance—all friction points that slow teams down and introduce governance gaps.

Consolidated Platform vs. Tool Sprawl

Enterprise content teams often operate with fragmented stacks: separate CMS, DAM, SEO tool, distribution platform, and analytics. The average enterprise maintains 91 marketing technology tools, yet only 42-56% of capabilities are utilised.

The operational cost of this fragmentation is measurable:

- Workers lose 45 minutes daily toggling between tools

- Each context switch takes 9.5 minutes to recover from

- Only 53.3% of senior marketers report positive ROI from their martech investments

Platform consolidation has become a strategic priority as a result: 60% of marketing leaders are actively seeking to consolidate their technology stacks to improve efficiency.

Publive takes this approach by consolidating content creation, AI-powered distribution, push notifications, analytics (GA4/GSC integration), and Core Web Vitals optimisation into a single unified system. This eliminates tool sprawl, reduces operational costs, and lets AI work across the full content lifecycle with shared context.

GEO Readiness and Performance Standards

A consolidated stack only delivers its full value when the underlying platform is built for discoverability. The right system should include structured data support, fast page performance, and compliance-ready infrastructure (WCAG, DPDP).

Publive's architecture is designed for AI search and GEO discoverability, with 98% of sites passing Core Web Vitals benchmarks, the highest pass rate among leading DXPs. Fast page performance (LCP under 2.5s, INP under 200ms, and a CLS of 0.05, well inside Google's 0.1 "good" threshold) affects both traditional SEO and AI-powered search citability.
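For teams checking their own sites, the pass/fail logic is straightforward. The sketch below applies Google's published "good" thresholds to raw field samples: LCP at or below 2.5 s, INP at or below 200 ms, and CLS at or below 0.1, assessed at the 75th percentile of page loads. The nearest-rank percentile is a simplification; tools such as the Chrome UX Report compute their own aggregates. The field names are hypothetical.

```python
import math

# Google's published "good" thresholds for Core Web Vitals, assessed at p75.
GOOD = {"lcp_ms": 2500.0, "inp_ms": 200.0, "cls": 0.1}


def p75(samples: list[float]) -> float:
    """75th percentile by the nearest-rank method (a simplification of field tooling)."""
    ordered = sorted(samples)
    rank = math.ceil(0.75 * len(ordered))
    return ordered[rank - 1]


def passes_cwv(field_data: dict[str, list[float]]) -> dict[str, bool]:
    """Per-metric pass/fail at p75, plus an overall verdict requiring all three."""
    results = {metric: p75(field_data[metric]) <= limit for metric, limit in GOOD.items()}
    results["overall"] = all(results.values())
    return results
```

Judging at p75 rather than the average means a page passes only when three quarters of real visits meet the bar, so a fast median cannot hide a slow tail.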

For Indian enterprises in BFSI and digital media, DPDP readiness is urgent. Platforms processing Indian user data need to meet consent management, breach reporting, and data governance obligations before the 18-month deadline.

Frequently Asked Questions

What is generative AI content management?

Generative AI content management uses AI models to assist in creating, organising, personalising, and distributing content within enterprise CMS workflows. Human oversight ensures quality, accuracy, and brand consistency throughout the process.

How do you optimise content for generative AI?

Start with clear content hierarchies and semantic depth, then build topical authority through content clusters and schema markup. Keeping content accurate and regularly updated ensures AI retrieval systems can surface and cite it reliably.

What is the 10-20-70 rule for AI?

The 10-20-70 rule allocates 10% of AI adoption effort to technology, 20% to data and infrastructure, and 70% to people and process change management. For enterprise content teams, this means investing heavily in training, workflow redesign, and editorial governance before rolling out new AI tools.

What are the biggest risks of using generative AI in enterprise content teams?

Key risks include AI hallucinations (18.2% average in medical content, 13.8% in financial data), data privacy violations, brand voice inconsistency, and lack of governance.

Can generative AI replace human content creators in enterprise teams?

Generative AI handles repetitive, high-volume tasks — drafts, summaries, metadata, translations — freeing editors and strategists to focus on editorial judgment, brand storytelling, and creative decisions that require human experience. The role shifts, not disappears.